Meta Rolls Out Four New In-House AI Chips to Break Nvidia Grip

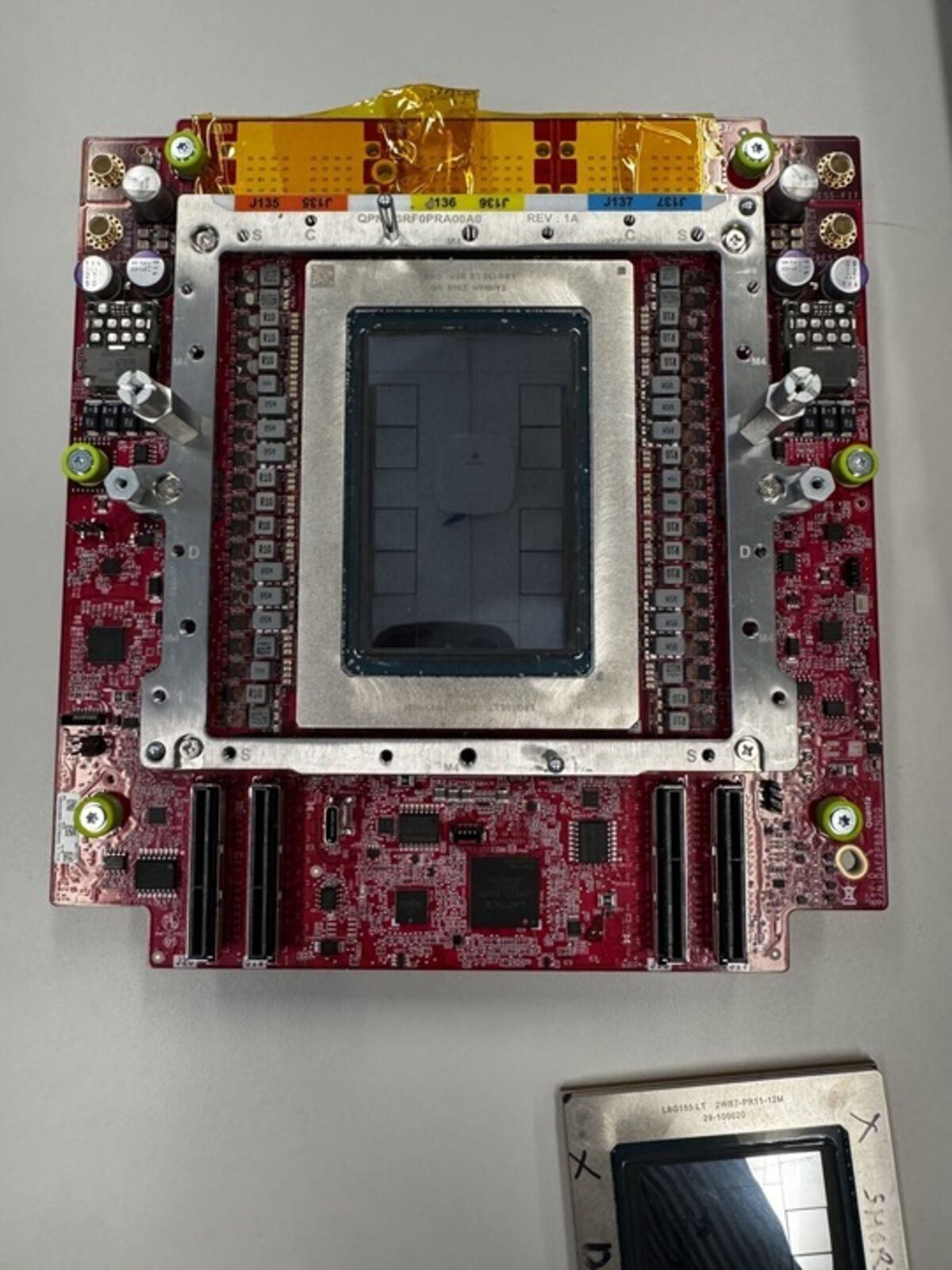

Image: Bloomberg AI

Main Takeaway

Meta's MTIA 300-500 series chips target training and inference workloads, aiming to cut GPU costs and boost performance for recommendation and generative-AI systems.

Summary

Meta’s Four-Chip Roadmap

Meta Platforms has sketched a straight-line plan for four new custom accelerators—MTIA 300, 400, 450, and 500—that will slot into its data-center racks between now and the end of 2027. The first of the set, MTIA 300, is already in limited production, according to Yahoo Finance and Longbridge. MTIA 400 is “about to be deployed,” while 450 and 500 are still on the drawing board, with tape-out and mass production targeted for 2027.

Each chip is tuned for different slices of Meta’s workload. The 300 handles light training and large-scale inference for ranking Instagram Reels and Facebook feed posts. The 400 bumps up on-chip memory bandwidth to feed larger recommendation models. The 450 and 500, still under wraps, are expected to tackle generative-AI training clusters and will ship alongside new liquid-cooled server trays, Bloomberg reports.

Why Build When GPUs Exist?

Meta’s CFO, Susan Li, told attendees at a Bloomberg-sponsored tech conference that “some of our workloads really are very customized to us.” Translation: off-the-shelf Nvidia H100s and AMD MI300s are great for general-purpose training, but Meta’s ranking models use sparse embeddings and memory-access patterns that general GPUs don’t handle efficiently. Custom silicon lets Meta sand down power draw and latency while keeping the architecture tightly coupled to its PyTorch-based software stack.
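To make the "sparse embeddings" point concrete, here is a toy sketch in plain Python of a sum-pooled embedding lookup, the kind of operation PyTorch exposes as `nn.EmbeddingBag`. It is not Meta's implementation: the table size, the IDs, and the helper names (`make_table`, `embedding_bag_sum`, `touched_fraction`) are assumptions chosen for illustration. The point is that each query reads a handful of scattered rows from a large table and does only one addition per value fetched, so the work is dominated by memory access rather than arithmetic.

```python
import random


def make_table(num_rows, dim, seed=0):
    """Build a hypothetical embedding table: num_rows vectors of length dim."""
    rng = random.Random(seed)
    return [[rng.uniform(-1.0, 1.0) for _ in range(dim)] for _ in range(num_rows)]


def embedding_bag_sum(table, ids):
    """Gather the rows named by ids and sum them element-wise."""
    dim = len(table[0])
    pooled = [0.0] * dim
    for row_id in ids:
        row = table[row_id]  # scattered read: row_id can point anywhere in the table
        for j in range(dim):
            pooled[j] += row[j]  # a single add per value fetched
    return pooled


def touched_fraction(table, ids):
    """Fraction of table rows that one lookup actually reads."""
    return len(set(ids)) / len(table)


if __name__ == "__main__":
    table = make_table(num_rows=100_000, dim=16)
    rng = random.Random(1)
    ids = [rng.randrange(len(table)) for _ in range(20)]  # hypothetical feature IDs
    pooled = embedding_bag_sum(table, ids)
    print(len(pooled), touched_fraction(table, ids))
```

In this toy setup a query touches at most 20 of 100,000 rows, which is the irregular, low-arithmetic access pattern the article says general-purpose GPUs handle inefficiently.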

The company still plans to spend billions on Nvidia and AMD gear—no one is ripping GPUs out of racks tomorrow. Instead, Meta wants a portfolio play: buy merchant silicon for peak flexibility, deploy in-house chips for predictable, high-volume jobs, and pocket the margin that normally goes to Santa Clara.

Competitive Ripples

Nvidia and AMD lose pricing leverage when hyperscalers roll their own. Yahoo Finance and MarketWatch both flagged the move as an escalation in the long-simmering “coopetition” between cloud giants and GPU vendors. For now, the risk to Nvidia is moderate, since Meta still needs Hopper and Blackwell GPUs for frontier-model training, but every rack of MTIA silicon is a rack that doesn’t carry a 70 percent gross margin for Jensen Huang.

Google, Amazon, and Microsoft have similar programs (TPU, Trainium, and Maia, respectively). Meta’s late start means it must prove its chips can match Nvidia’s software ecosystem. The company is leaning on the open-source community—PyTorch 2.5 will ship with first-class MTIA back-end support later this year, according to Wired AI.

Supply-Chain Reality Check

Meta is fabless; the chips are being fabbed at TSMC on a 5-nanometer node with CoWoS advanced packaging, per Longbridge. Lead times remain brutal: new masks for the 450 and 500 won’t even hit the foundry until Q1 2027, implying risk of slippage if demand spikes or geopolitical tensions flare. The company has also tapped Broadcom and Marvell for SerDes IP and PCIe Gen 6 controllers, according to two sources familiar with the matter.

What Happens Next

Expect MTIA 400 to appear in Meta’s new Gallatin data-center campus in Tennessee by late 2026. If utilization meets internal KPIs—latency under 3 ms for 95th-percentile recommendation queries—Meta will scale the program aggressively. CFO Li hinted that future generations could move to TSMC’s 3-nm node and add HBM4 memory stacks, pushing total addressable workload from 15 percent of inference today to “north of 50 percent” by 2028.
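A KPI like "latency under 3 ms for 95th-percentile recommendation queries" can be checked with a few lines of arithmetic. The sketch below is a minimal illustration, not Meta's monitoring code: the nearest-rank percentile method, the function names, and the sample latencies are all assumptions made for the example.

```python
import math


def percentile_nearest_rank(samples, pct):
    """Return the pct-th percentile of samples using the nearest-rank method."""
    if not samples:
        raise ValueError("need at least one sample")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank into the sorted list
    return ordered[rank - 1]


def meets_latency_kpi(latencies_ms, threshold_ms=3.0, pct=95):
    """True if the pct-th percentile latency is strictly below threshold_ms."""
    return percentile_nearest_rank(latencies_ms, pct) < threshold_ms


# Hypothetical per-query latencies in milliseconds (illustrative only).
sample_ms = [1.2, 1.4, 1.1, 2.0, 1.8, 2.6, 1.5, 1.3, 2.9, 1.7,
             1.6, 1.9, 2.2, 1.0, 2.4, 1.4, 2.1, 1.2, 2.8, 4.5]

if __name__ == "__main__":
    print(percentile_nearest_rank(sample_ms, 95), meets_latency_kpi(sample_ms))
```

With these 20 samples, the single 4.5 ms outlier falls above the 95th-percentile rank, so the check passes; a second slow query would push the 95th percentile over the threshold. That is why a tail-latency target is stricter than an average-latency one.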

Wall Street reaction has been muted. Meta’s stock barely moved on the announcement, suggesting investors see this as a long-term cost play rather than an immediate revenue catalyst. Still, any slip in Nvidia’s October earnings guidance could be read as hyperscaler defection risk—Meta included.

Bottom Line

Meta isn’t trying to kill Nvidia; it’s trying to haggle. The four-chip MTIA roadmap gives the company credible leverage in next year’s GPU negotiations while shaving operating costs off its most predictable workloads. Whether the silicon actually outperforms merchant GPUs is almost beside the point—having an alternative in the rack is the win.

Key Points

Meta’s four-chip MTIA roadmap runs through the end of 2027, starting with MTIA 300, which is already in limited production.

Custom accelerators target Meta-specific workloads—recommendation ranking and generative-AI inference—rather than general-purpose training.

The initiative reduces dependence on Nvidia/AMD GPUs and provides leverage in future procurement negotiations.

TSMC 5-nm fabrication and advanced packaging keep Meta fabless, but 2027 tape-outs face long lead-time risk.

PyTorch integration and open-source tooling aim to close the software gap with Nvidia’s CUDA ecosystem.

FAQs

The MTIA chips will primarily power Meta’s recommendation systems (Instagram Reels, Facebook feed) and later-stage generative-AI inference, not large frontier-model training.

Meta is not abandoning GPUs. It will continue to buy Nvidia and AMD GPUs for flexible, peak-demand training; MTIA chips handle predictable, high-volume inference.

TSMC is producing the chips on its 5-nanometer node with CoWoS advanced packaging; Broadcom and Marvell supply SerDes and PCIe IP.

MTIA 450 and 500 are scheduled for mass production by the end of 2027, assuming no foundry delays.

Meta’s effort is similar to Google’s TPU or AWS Trainium/Inferentia—custom silicon to cut costs and optimize internal workloads—but Meta is starting several years later.

Outside access to MTIA hardware is unlikely. Meta is keeping the architecture in-house; only PyTorch open-source support is planned, with no public cloud offering announced.

