Google Photos Turns Your Camera Roll Into Cher's Clueless Closet

Image: Google AI Blog

Main Takeaway

Google Photos launches AI Wardrobe, letting users virtually try on clothes they already own using existing photos in their gallery.


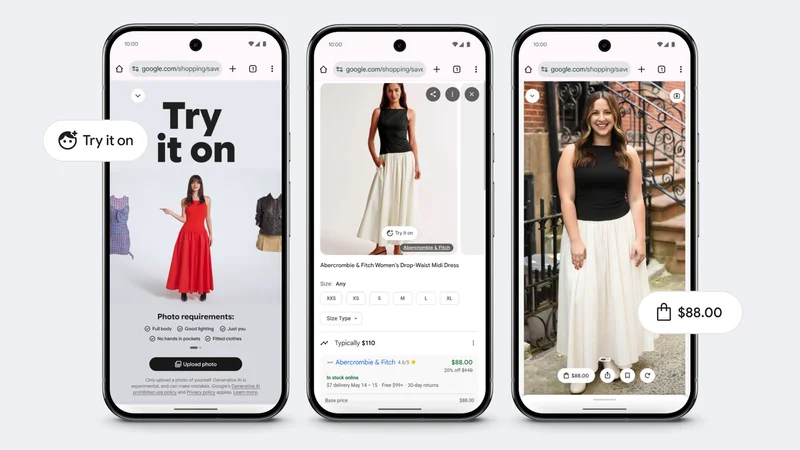

How the new Wardrobe feature works

Google Photos now scans your existing photos to build a digital closet called Wardrobe. The system identifies clothing items from your gallery photos and creates a searchable virtual inventory. Users can then mix and match these pieces to create new outfit combinations without physically trying anything on. The feature uses Google's Gemini 2.5 Flash Image model (codenamed Nano Banana) to generate realistic virtual try-ons based on your existing clothes, according to Google's official announcement on April 29th.

The process is surprisingly simple. Google Photos analyzes your photo library to automatically detect and catalog clothing items you've worn in past photos. These pieces get organized into a digital wardrobe interface where you can browse everything you own virtually. When you want to try on an outfit, the AI generates a realistic preview of how the combination would look on you, using either an existing full-body photo or one it creates from a simple selfie.

Why this matters for fashion tech

This represents a fundamental shift from shopping-focused AI try-on tools to wardrobe management. While Google's existing Shopping try-on feature helps users visualize new purchases, Wardrobe focuses on maximizing what you already own. Google is the first major tech company to apply AI try-on technology to existing wardrobes at scale.

The timing feels right. Consumers are increasingly conscious about sustainable fashion and maximizing existing clothing. By making it easier to visualize new combinations from existing pieces, Google could reduce unnecessary purchases while helping users feel more confident in their daily outfit choices.

Technical capabilities and limitations

The system requires surprisingly little input. Unlike early virtual try-on tools that needed professional photos or full-body shots, Wardrobe works with regular smartphone photos. Google's Nano Banana model fills in gaps, generating realistic body proportions and clothing fit from casual snapshots.

However, early testing suggests some rough edges. Android Police's March 2026 hands-on with Google's try-on tools found them "clunky" and prone to generating unrealistic results. The accuracy depends heavily on photo quality and lighting conditions in your existing photos. Items photographed in poor lighting or from awkward angles may not render correctly in virtual combinations.

Privacy implications of clothing AI

Your wardrobe data becomes part of Google's broader understanding of your personal style and preferences. The company can potentially use this information to improve shopping recommendations, targeted advertising, and even predict fashion trends based on aggregate user data.

Users should understand that their clothing choices, style evolution, and outfit preferences may become part of Google's AI training data. Google states this data stays within your personal account, but the company's track record with user data raises questions about long-term privacy protections for such intimate personal information.

What this means for competitors

Amazon, Meta, and Apple have all experimented with virtual try-on technology, but none have integrated existing wardrobe management at this scale. Amazon's Echo Look attempted similar functionality before Amazon discontinued the device. Meta's fashion AI focuses on new purchases through Instagram Shopping.

Google's advantage lies in Photos' massive user base and existing photo library access. Competitors will likely rush to develop similar features, particularly Apple with its Photos app and Amazon with renewed fashion AI efforts. The race is now on to build the most comprehensive digital wardrobe experience.

The impact on fashion and retail

This could fundamentally change how people approach personal style. When users can instantly visualize any combination from their existing wardrobe, it may reduce impulse purchases while increasing satisfaction with owned clothing. Retailers might see decreased returns as customers better understand how new items fit their existing style.

Fashion brands should pay attention. The feature creates a new touchpoint where users interact with their products long after purchase. Brands might need to optimize how their clothing looks in photos for better AI recognition, similar to how they adapted for social media visibility.

What happens next

Google plans to roll out Wardrobe gradually, starting with U.S. users in the coming weeks. International expansion will follow based on user feedback and technical performance. The company hints at future integrations with Google Shopping, potentially suggesting new purchases that complement your existing wardrobe.

Expect rapid iteration. Google will likely add features like outfit planning for specific events, weather-based recommendations, and integration with calendar apps. Third-party developers may eventually access wardrobe APIs for styling apps or fashion services, creating an ecosystem around Google's digital closet infrastructure.

How to get started

Wardrobe appears as a new tab in Google Photos. Users can opt in to let the AI scan their photo library for clothing items. The system walks you through a quick setup process, identifying key pieces and asking you to confirm or reject its findings.

Currently, there's no manual upload option for clothing items. Everything must come from existing photos in your Google Photos library. Users concerned about privacy can selectively exclude photos or entire albums from the wardrobe analysis through the app's privacy settings.

Key Points

Google Photos now creates a virtual wardrobe from existing photos, letting users try on clothes they already own

Uses the Gemini 2.5 Flash Image model (Nano Banana) to generate realistic outfit combinations from the photo library

Works with casual smartphone photos, no need for professional shots or full-body images

First major tech company to apply AI try-on to existing wardrobes at consumer scale

Privacy implications include detailed style data becoming part of Google's user profile

Questions Answered

Do I need to photograph my clothes separately?

No, the system automatically scans your existing Google Photos library to identify and catalog clothing items you've worn in past photos.

Can I add clothing items manually?

Currently no, the feature only works with clothing items it can identify from photos already in your Google Photos library.

Is my wardrobe data private?

Google states the data stays within your personal account, but your style preferences and clothing choices may inform broader Google services and recommendations.

How accurate are the virtual try-ons?

Accuracy varies based on photo quality and lighting. Early testing shows some limitations with poor lighting or awkward angles, but results improve with better source photos.

Can I keep certain photos out of the analysis?

Yes, users can selectively exclude photos or entire albums from wardrobe analysis through Google Photos privacy settings.

When will Wardrobe be available outside the U.S.?

Google plans international expansion after the initial U.S. rollout, but no specific timeline has been announced.
