Google Drops Gemma 4: Open Multimodal AI That Runs on Your Phone

Main Takeaway

Google's Gemma 4 brings frontier-class multimodal AI to local hardware under the Apache 2.0 license, enabling offline use across phones, laptops, and servers.

Summary

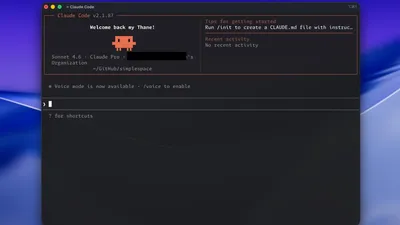

The open model that thinks on your device

Google just released Gemma 4, the first major update to its open-weight AI models in over a year. Unlike the Gemini models, which are available only through Google's APIs, Gemma 4 runs locally on hardware ranging from cloud servers to Raspberry Pi devices. The family includes multiple variants optimized for different use cases, from a 270M-parameter model for lightweight tasks to larger configurations that handle complex reasoning.
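To make the size range concrete, here is a rough sketch of how much memory a model's weights need: parameter count multiplied by bytes per parameter. The precision table and the function name `weight_memory_mb` are illustrative assumptions, not formats or tooling Google is known to ship for Gemma 4. The estimate covers weights only and ignores activations and other runtime overhead.

```python
# Rough weight-memory estimate: parameters x bytes per parameter.
# The precisions below are illustrative assumptions, not a list of
# formats published for Gemma 4.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_mb(num_params: int, precision: str) -> float:
    """Approximate weight storage in megabytes (1 MB = 10**6 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e6


if __name__ == "__main__":
    params_270m = 270_000_000  # the 270M-parameter variant named in the article
    for precision in BYTES_PER_PARAM:
        size = weight_memory_mb(params_270m, precision)
        print(f"{precision}: {size:.0f} MB")
```

By this arithmetic, a 270M-parameter model needs about 540 MB for its weights at 16-bit precision, which is why the smallest variants are plausible candidates for phones and single-board computers.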

What changed with the license

With Gemma 4, Google replaced the more restrictive terms that governed earlier Gemma releases with Apache 2.0, a standard OSI-approved open-source license. This means developers can modify, distribute, and commercialize the models without Google's approval, as long as they follow the license's notice and attribution requirements. According to ZDNet, this addresses a major pain point: previous versions carried usage restrictions that made enterprise adoption tricky.

Why this matters for open source

The timing is strategic. While Meta's Llama models dominate open-source AI discussions, Google was losing mindshare among developers who wanted genuinely open alternatives. Gemma 4's Apache 2.0 license puts it in direct competition with Llama 3.x, with Google's research pedigree behind it. Hugging Face notes the models achieve "pareto frontier arena scores," suggesting they perform unusually well for their size.

The multimodal edge

Gemma 4 handles text, images, and audio natively across 140+ languages, with context windows of up to 256K tokens. This is not just an academic capability: it enables applications like real-time image analysis on phones without cloud connectivity. Google DeepMind emphasizes this as "frontier intelligence for mobile and IoT devices," a market where OpenAI and Anthropic have no presence.
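A context window is a hard budget shared by the prompt and the generated output. The sketch below shows the bookkeeping an application might do on top of that limit. It assumes "256K" means 256 × 1024 tokens, which the article does not specify, and the helper names `input_budget` and `split_to_fit` are hypothetical, not part of any Gemma tooling. Tokens are represented as a plain list; a real application would get them from the model's tokenizer.

```python
# Assumption: "256K" is read as 256 * 1024 tokens; the article does not say
# whether it means that or 256,000.
CONTEXT_WINDOW = 256 * 1024


def input_budget(context_window: int, reserved_for_output: int) -> int:
    """Tokens left for the prompt after reserving room for the reply."""
    if not 0 <= reserved_for_output < context_window:
        raise ValueError("reserved_for_output must be in [0, context_window)")
    return context_window - reserved_for_output


def split_to_fit(tokens: list, budget: int) -> list:
    """Split a token sequence into consecutive chunks of at most `budget` tokens."""
    if budget <= 0:
        raise ValueError("budget must be positive")
    return [tokens[i:i + budget] for i in range(0, len(tokens), budget)]


if __name__ == "__main__":
    budget = input_budget(CONTEXT_WINDOW, reserved_for_output=4096)
    print(f"prompt budget: {budget} tokens")
```

Inputs that exceed the budget have to be chunked, summarized, or truncated before they reach the model. A larger window mainly reduces how often that is necessary.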

What happens next

Expect rapid adoption among developers building privacy-focused applications. The combination of open licensing, on-device capability, and multimodal features creates opportunities for offline AI assistants, local content moderation, and edge computing applications that weren't practical before. Google also announced ShieldGemma 2 for safety filtering, suggesting it is building an ecosystem rather than just releasing models.

Key Points

Gemma 4 switches to the Apache 2.0 license, making it truly open source for commercial use

Runs locally on everything from cloud servers to Raspberry Pi and mobile phones

Multimodal capabilities handle text, images, and audio across 140+ languages

Available in multiple sizes, from 270M parameters up to larger variants

Represents Google's strategic response to Meta's dominance in open-source AI

FAQs

What is the difference between Gemini and Gemma 4?

Gemini is Google's proprietary model accessed via cloud APIs, while Gemma 4 is open-source and designed to run locally on your own hardware without internet connectivity.

Can I use Gemma 4 commercially?

Yes, the Apache 2.0 license allows commercial use, modification, and distribution without Google's permission or licensing fees, provided the license's notice and attribution terms are followed.

What hardware can run Gemma 4?

Anything from high-end servers to laptops, phones, and even Raspberry Pi devices, with different model sizes optimized for different hardware constraints.

Does Gemma 4 need an internet connection?

No, it's designed for offline operation once downloaded, making it suitable for privacy-sensitive applications or environments with poor connectivity.
