Meta Gives Employees an AI Mark Zuckerberg Clone for Internal Chats

Image: Ars Technica AI

Main Takeaway

Meta is training a photorealistic AI avatar of CEO Mark Zuckerberg to answer employee questions, and it may soon let creators build their own digital doubles.

Summary

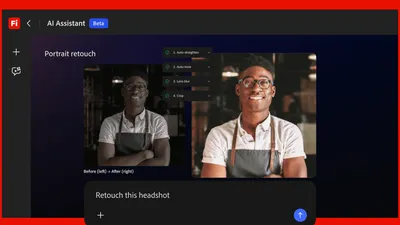

How the AI Zuckerberg works

The avatar is built inside Meta’s newly formed Superintelligence Labs and trained on thousands of hours of Zuckerberg’s recorded meetings, public earnings calls, and internal “ask-me-anything” videos. Engineers feed the model not only the words he uses but also the pauses, hand gestures, and vocal inflections he tends to use when making product decisions. According to people who have seen internal demos, the system renders a high-resolution 3-D face and syncs lip movement with generated speech in under 150 milliseconds, avoiding the uncanny lag that plagued earlier celebrity bots. Voice quality is reinforced by last year’s acquisitions of PlayAI and WaveForms, whose models compress Zuckerberg’s voiceprint into a 128-kilobit neural codec that can be streamed at low latency across Meta’s internal network.

Why employees are being nudged to use it

Meta’s HR team has quietly made the avatar part of an internal pilot program in which product managers and engineers can schedule 15-minute “Z office hours” with the AI. The stated goal, according to an internal FAQ reviewed by the Financial Times, is to let staff feel “closer to the founder” without Zuckerberg spending every afternoon in Q&A sessions. Some employees have used the clone to get quick feedback on feature ideas; others have asked it how Zuckerberg might prioritize competing roadmaps. Participation is voluntary, yet the company is simultaneously running a “skills baseline exercise” that rates staff on how well they integrate Meta’s open-source agent framework OpenClaw into their workflows, leading to quiet speculation that refusing the AI could flag someone as less “AI-native.”

Safety and optics risks

The same architecture that lets the avatar mimic Zuckerberg’s mannerisms also makes it a juicy target for deepfake misuse. Meta restricts the bot to the corporate VPN, watermarks every generated frame, and requires a second employee to approve any recording or screen-share. Even so, several staff told the Financial Times they worry a rogue prompt could coax the AI into statements that sound like official strategy or leaked financial guidance. Outside critics point to last year’s fiasco in which user-created AI characters turned sexual and ask whether an internal avatar could be jailbroken into similarly embarrassing territory. Meta insists the model is sandboxed, but the company’s own history of teen safety lapses looms large.
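The recording safeguard described above amounts to a two-person rule. As a rough illustration only, the Python sketch below shows how such a check might be expressed; the `RecordingRequest` type, its field names, and the `may_record` function are hypothetical and do not reflect Meta’s actual implementation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class RecordingRequest:
    """Hypothetical request to record or screen-share an avatar session."""
    requester: str
    approver: Optional[str]   # None means nobody has approved yet
    on_corporate_vpn: bool


def may_record(request: RecordingRequest) -> bool:
    """Allow recording only on the VPN and with a distinct second approver."""
    if not request.on_corporate_vpn:
        return False
    if request.approver is None:
        return False
    # The approver must be a second employee, not the requester.
    return request.approver != request.requester
```

The key design point is that self-approval is rejected, so a single employee cannot capture footage alone.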

Creator economy implications

If the pilot succeeds, Meta plans to open the same pipeline to Instagram and Facebook creators who want a 24/7 digital twin. Early mock-ups show influencers uploading 30 minutes of selfie video and receiving an avatar that can live-stream product drops or answer fan questions while they sleep. Revenue would flow from branded partnerships placed inside those AI conversations, with Meta taking a cut similar to its existing Stars tipping system. The technical hurdle remains compute cost: rendering a photorealistic avatar currently requires two H100 GPUs for every concurrent user, so Meta is experimenting with lower-resolution fallback modes that activate when demand spikes.
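The compute constraint is easier to grasp with rough arithmetic. The Python sketch below uses the reported figure of two H100 GPUs per concurrent user at full photorealism. The fallback cost of one GPU per user, the function names, and the allocation policy are assumptions made for illustration, since the reporting gives no figures for the lower-resolution modes.

```python
FULL_GPUS_PER_USER = 2      # reported: two H100s per concurrent user
FALLBACK_GPUS_PER_USER = 1  # assumption: no fallback figure is reported


def gpus_for_full_quality(concurrent_users: int) -> int:
    """GPUs needed to serve every concurrent user at full photorealism."""
    return concurrent_users * FULL_GPUS_PER_USER


def plan_capacity(concurrent_users: int, gpu_pool: int) -> dict:
    """Split users between full quality and fallback for a fixed GPU pool.

    Hypothetical policy: serve everyone if possible, then upgrade as many
    users to full quality as the spare GPUs allow.
    """
    baseline = concurrent_users * FALLBACK_GPUS_PER_USER
    if gpu_pool >= baseline:
        spare = gpu_pool - baseline
        upgrade_cost = FULL_GPUS_PER_USER - FALLBACK_GPUS_PER_USER
        full = min(concurrent_users, spare // upgrade_cost)
        return {"full": full, "fallback": concurrent_users - full, "unserved": 0}
    # Not enough GPUs even for fallback: some users cannot be served.
    served = gpu_pool // FALLBACK_GPUS_PER_USER
    return {"full": 0, "fallback": served, "unserved": concurrent_users - served}
```

Under these assumptions, 1,000 concurrent users at full quality would need 2,000 GPUs, while a pool of 150 GPUs serving 100 users would keep 50 at full quality and move 50 to fallback.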

Zuckerberg’s deeper AI obsession

People close to the CEO say he personally reviews every weekly training loss curve for the avatar and spends five to ten hours a week coding on adjacent projects, including a separate “CEO agent” that drafts slide decks and writes performance reviews in his tone. That agent is distinct from the avatar: the agent is text-only and lives in Meta’s internal productivity suite, while the avatar is the animated face employees can chat with. The split hints at a future in which executives maintain an entire constellation of AI selves: a strategist agent, a public-facing avatar, and perhaps even a board-meeting bot. Zuckerberg’s hands-on involvement also signals that Meta views these tools not as side projects but as core infrastructure for running a 70,000-person company.

What happens next

Meta will decide by late summer whether to graduate the avatar from pilot to standard internal tool. A green light would likely coincide with the public launch of AI Studio 2.0 at Connect 2026, where creators could begin generating their own doubles. Meanwhile, expect enterprise software vendors such as Microsoft and Google to fast-track competing offerings that let any CEO spin up a photorealistic stand-in. The long-term question is whether staff will trust strategic guidance from an AI that is, by design, indistinguishable from the real founder. If engagement stays high, Meta could expand the program to include AI versions of other senior leaders, effectively creating a parallel executive layer that never takes a day off.

Key Points

Meta has built a photorealistic AI avatar of Mark Zuckerberg trained on his voice, image, and mannerisms for internal employee Q&A.

Employees can book 15-minute sessions with the avatar; participation is voluntary, though it coincides with a company-wide AI skills assessment.

The system was built inside Meta’s Superintelligence Labs and uses voice tech from recent acquisitions PlayAI and WaveForms.

Meta plans to offer the same avatar pipeline to Instagram and Facebook creators if the internal pilot succeeds.

A separate text-only “CEO agent” drafts slides and reviews in Zuckerberg’s style, indicating a multi-AI executive future.

FAQs

The avatar does not replace meetings with Zuckerberg; it supplements them. Staff can opt to chat with the avatar for quick feedback, but leadership says it won’t eliminate human check-ins.

To guard against misuse, all sessions stay on the corporate VPN, every frame is watermarked, and a second employee must approve any recording or screen-share.

Creators could get access in late 2026, Meta says, if the internal pilot shows high engagement and compute costs drop enough.

Declining to use the avatar carries no direct penalty, but concurrent AI-skills exercises are being tracked, so avoiding the tech could be noticed.

Full photorealism takes roughly two H100 GPUs per concurrent user; low-resolution fallback modes use less.

Source Reliability

47% of sources are trusted · Avg reliability: 70
