Google's Search Live Goes Global: 200+ Countries Get AI Search Camera

Image: Google AI Blog

Main Takeaway

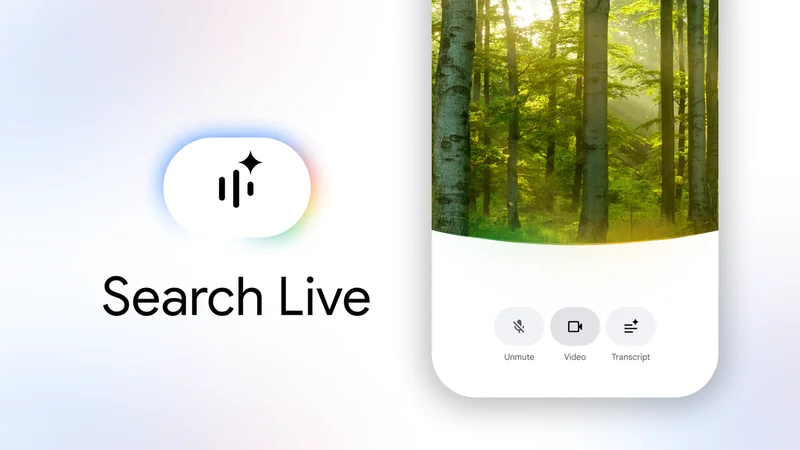

Google's multimodal Search Live expands from US-only to 200+ countries, letting users point phone cameras and ask questions in any supported language.

Summary

What exactly is Google rolling out?

Google is pushing Search Live — the camera-and-voice powered AI search mode — to every country and territory where AI Mode already works, according to the company’s own AI Blog. That covers more than 200 markets, up from the previous US-only rollout last September. The expansion pairs Search Live with the new Gemini 3.1 Flash Live model, letting users point a phone at an object or scene and ask questions in dozens of languages. In parallel, Google Translate’s headphone-based live translation is also leaving beta and landing on iOS for the first time, supporting 70-plus languages with speaker tone preserved.

How does Search Live actually work?

Think of it as Google Lens crossed with a chatbot. Open the Google app, tap the camera icon, and start talking. The system overlays answers directly on whatever the lens sees — price checks on wine bottles, plant care tips, or tourist info on landmarks. Under the hood, Gemini 3.1 Flash Live handles multimodal reasoning: it fuses visual, audio and text inputs into a single context, so you can ask follow-ups like "how tall is that building?" without restating what you're looking at. The same backbone now powers real-time audio translation piped straight to any headphones, wired or wireless.

Why is this happening now?

Two pressures converged. First, Google’s AI Mode chat search is finally stable enough for global traffic after months of US and India testing. Second, rivals are racing to own the "visual search" moment: OpenAI’s ChatGPT with GPT-4o already offers live camera Q&A, and Perplexity just shipped a similar feature. By bundling Search Live with Translate’s headphone feature, Google turns two separate products into one globally available package: ask about what you see, then hear the answer in any supported language. It’s a classic bundling play to keep users inside Google’s ecosystem.

Which languages and countries are included?

Google hasn’t published an exact list, but says Search Live covers "all languages and locations where AI Mode is available", while headphone translation supports more than 70 languages. Early access pages show France, Germany, Italy, Japan, Spain and Thailand among the newly supported regions. Android and iOS users get parity, ending the historical Pixel-first delay. The catch: countries where AI Mode is blocked (think China, Iran, and parts of the EU still wrestling with the AI Act) remain excluded for now.

What does this mean for developers and businesses?

Local SEO just got messier. Restaurants, stores and tourist spots will now surface in AI-generated spoken answers triggered by a camera scan, not just typed queries. Expect a land grab for structured data markup and high-quality product photos. Developers building camera-centric apps should watch Google’s Search Console for new impression categories tied to "Live visual search". Meanwhile, any brand with a physical presence needs to optimize for Gemini’s visual understanding: clear signage, consistent branding, and multilingual alt text on images.
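
For readers unfamiliar with the structured data markup mentioned above, here is a minimal sketch of what it can look like. It assumes schema.org's LocalBusiness vocabulary serialized as JSON-LD, a common format for describing a business to search engines. Google has not said which markup, if any, Search Live reads, so treat that choice as an assumption. The helper names and the business details are hypothetical.

```python
import json


def build_local_business_jsonld(name, street, city, country, image_urls, url):
    """Return a schema.org LocalBusiness description as a JSON-LD dict.

    Assumption: LocalBusiness is used for illustration only; the article
    does not say which markup Search Live consumes.
    """
    return {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "image": list(image_urls),
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressCountry": country,
        },
    }


def to_script_tag(data):
    """Serialize the dict into a JSON-LD <script> tag for a page's HTML."""
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so a string value cannot close the script element early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical business, for illustration only.
example = build_local_business_jsonld(
    name="Example Wine Shop",
    street="1 Example Street",
    city="Lyon",
    country="FR",
    image_urls=["https://example.com/storefront.jpg"],
    url="https://example.com",
)
print(to_script_tag(example))
```

The printed tag would be placed in the page's HTML. Whether it influences camera-triggered answers is not something the source confirms.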

Are there privacy or latency concerns?

Translation is processed entirely on-device, Google says, so translation audio never leaves the phone. Search Live queries, by contrast, route through Google’s cloud for the heavy multimodal reasoning, with typical latency under two seconds on modern devices. Still, the sheer volume of camera data heading to Google’s servers raises the usual red flags. Users can disable Search Live in app settings, and EU residents retain GDPR deletion rights, but the opt-out is buried three menus deep.

What happens next?

Expect rapid iteration. Google is already testing "agentic" follow-up actions inside Search Live, such as booking a table when you scan a restaurant or adding scanned products to a shopping list. With headphone translation now leaving beta, pressure will mount for an offline mode. Competitors won’t sit still: Perplexity and OpenAI will push similar global rollouts, while Apple’s rumored visual Siri refresh could leapfrog both by integrating directly with the iOS camera. For now, Google has the head start on global reach.

Key Points

Search Live expands from US-only to 200+ countries, powered by Gemini 3.1 Flash Live for multimodal reasoning.

Live headphone translation exits beta and lands on iOS, supporting 70+ languages while preserving speaker tone and rhythm.

All processing is on-device for translation, cloud-based for Search Live queries, with sub-two-second latency.

Local businesses must now optimize for AI-driven visual search as users point cameras instead of typing queries.

Rollout excludes countries where AI Mode is blocked, including China, Iran, and parts of the EU still finalizing AI Act compliance.

FAQs

No Pixel hardware is required. Any Android or iOS phone running the latest Google app works. Headphone translation also supports any wired or wireless earbuds, not just Pixel Buds.

Search Live data does leave your phone: queries route through Google’s cloud for processing. Translation audio stays entirely on your device.

Search Live is unavailable in any region where Google AI Mode isn’t available, including China, Iran, and some EU countries pending AI Act compliance.

On translation quality, Google claims Gemini preserves speaker rhythm, emphasis and tone. Early tests show near-real-time latency with occasional idiom hiccups.

Businesses cannot opt out of Search Live directly. Standard web opt-outs apply, but local listings and photos will still surface unless removed from Google Business Profile.

